The AiGILE Blog

Browse our latest articles

Every week, this blog writes itself, posts to LinkedIn, and lands in your inbox. We thought you might want to know how.

We automated our own content marketing using AI. Here's exactly what we built and what it cost.

There's a certain irony in an AI consultancy manually writing blog posts, scheduling social media, and sending newsletters. We noticed it too. So we built a system to handle most of it automatically — and then we thought, what better way to show what's possible than to explain exactly how we did it.

This isn't a technical manual. It's a plain-English look at what we built, what tools we used, and why any business with a marketing function should at least understand what's now possible.

The problem we were solving

Consistent content marketing is one of those things that everyone agrees is important and almost nobody does well. Not because people lack ideas, but because they lack time. Writing a blog post, turning it into social media copy, designing a header image, sending a newsletter — done properly, that's a full day's work every week.

We wanted to produce genuinely useful content for Australian business leaders on a daily and weekly basis, without that content consuming our team. The result is what we call BRAiKING NEWS — a system that researches AI news, writes social posts, drafts blog posts, generates images, and distributes everything with almost no human intervention.

Almost. The human still reads it before it goes out. That matters, and we'll come back to it.

What the system does

Every morning at 6am, the system wakes up and scans the internet for AI news that's relevant to Australian businesses. It selects three stories, writes a two- or three-sentence summary of each, explains why it matters for business leaders, and queues them to post on LinkedIn and BlueSky throughout the day.

Once a week, it takes the most interesting of those stories and expands it into a full blog post — complete with a header image generated specifically for that topic. It then writes a newsletter combining the week's highlights and the blog link, creates a draft campaign ready to send, and posts to the AiGILE company LinkedIn page with the blog image attached.

Everything gets reviewed before it goes live. A human reads it, decides it's good enough, and presses send. That's the only step we haven't automated — deliberately.
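For readers who like to see the idea in code, the review gate can be pictured as a single flag on each draft. This is a hypothetical Python sketch, not the actual Make.com scenario: the `Draft` class, the `approved` field and the `publishable` function are names invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    """One piece of generated content waiting for review (hypothetical shape)."""
    title: str
    body: str
    approved: bool = False  # flipped to True by a human reviewer, never by the pipeline


def publishable(drafts):
    """Return only the drafts a human has explicitly approved."""
    return [d for d in drafts if d.approved]


# Two example drafts: one reviewed and approved, one still waiting.
queue = [
    Draft("Story A", "Summary of story A.", approved=True),
    Draft("Story B", "Summary of story B."),
]
ready = publishable(queue)
print([d.title for d in ready])
```

The point of the design is that the default is "not approved": nothing goes out unless someone has actively said yes.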

The tools involved

You don't need to understand the technical details to appreciate the picture. Here's what's doing the work:

Make.com is the engine room. It's an automation platform that connects different software tools and tells them when to talk to each other. Think of it as the conductor — it doesn't make music itself, but nothing happens without it.

Claude by Anthropic does the writing. Every piece of copy — the social posts, the blog drafts, the newsletter, the LinkedIn content — is written by Claude based on instructions we've carefully crafted. Getting those instructions right is genuinely skilled work. The difference between a prompt that produces generic AI-sounding waffle and one that produces something you'd actually want to read is significant.

Google Gemini generates the blog header images. Each week it creates an original editorial-style photograph based on the topic of that week's blog. We gave it guidance on our brand colours, the tone we're after, and the kind of imagery that works for an Australian business audience. It takes about 30 seconds.

Google Sheets is the database. Every story researched, every post written, every blog drafted — it all lives in a spreadsheet that serves as the system's memory and control panel. It's where we review content before approving it.

MailerLite handles the newsletter. The system creates a fully formatted draft campaign, ready for us to review and send with one click.

LinkedIn and BlueSky receive the finished posts directly from the system, on schedule, without anyone manually copying and pasting.

The bit that took the most work

Writing the instructions for Claude was by far the most time-consuming part of the build. You can point Claude at a news story and ask it to write a LinkedIn post, and it will. But left to its own devices, it will use phrases like "game-changer", "leverage" and "revolutionise", and produce something that sounds exactly like every other piece of AI-generated content you've ever ignored.

Getting it to write in a specific voice — direct, commercially minded, occasionally dry, never using buzzwords — required a lot of iteration. We ended up with detailed guidance that covers not just what to write, but what never to say, how to open a post, and what the audience actually cares about.

That investment pays off every time the system runs.

Why this matters for your business

The specific system we built is designed for content marketing, but the underlying approach applies almost anywhere you have a repetitive process that involves finding information, making decisions about it, and producing an output.

Customer communications. Monthly reporting. Meeting summaries. Tender responses. Compliance checklists. The tools exist to automate significant portions of all of these — not to remove the human judgement, but to remove the manual labour that surrounds it.

The human review step we kept in our system isn't a failure of automation. It's a deliberate design decision. AI writes a first draft in seconds. A human decides if it's good enough in minutes. The total time invested is a fraction of what it was before — but the quality control stays in place.

What it cost to build

The software tools involved are not expensive. Make.com, Claude, Gemini, MailerLite — the combined monthly cost for a system like this is well under a few hundred dollars. The investment is in the setup: understanding what you want to automate, configuring the tools, and writing the instructions that produce the right output.

That's exactly the kind of work we do with clients. If something in this post sparked an idea about a process in your business that could run more efficiently, we'd enjoy the conversation.

This blog post was drafted by Claude, reviewed by a human, and published to Squarespace as part of the system described above. The header image was generated by Google Gemini. Total hands-on time to produce this post: about four minutes.

Not sure where your business stands with AI?

Find out your AiDOPTION Score — a free 10-minute diagnostic that measures your AI readiness across Strategy, Technology, and People. You'll get a personalised score and practical recommendations.

Your Employees Are Already Using AI – Are You Managing The Risk?

New research reveals 82% of business data shared with AI comes from unmanaged personal accounts, creating serious security vulnerabilities for Australian SMEs.

Here's an uncomfortable truth: your staff are already using AI tools to get their work done faster. The question isn't whether they're using AI – it's whether you know about it, and whether you're protecting your business while they do it.

New research shows that 82% of data being fed into AI prompts comes from employees using personal accounts on free AI platforms. That means your client lists, proprietary processes, strategic plans, and yes, even source code, are potentially sitting on servers you don't control, governed by terms of service you haven't read.

For Australian businesses with 50-1000 employees, this isn't a hypothetical problem. It's happening right now, in your organisation, probably while you're reading this article.

The Shadow AI Problem

Shadow AI is exactly what it sounds like – AI usage that happens in the shadows, outside your IT policies and business oversight. Unlike shadow IT of the past (remember when marketing departments would quietly sign up for their own software subscriptions?), shadow AI carries a unique risk: every interaction potentially exposes sensitive business information.

When an employee copies a client proposal into ChatGPT to "make it sound more professional," or pastes financial data into Claude to create a summary report, they're inadvertently sharing confidential information with external platforms. The AI providers may use this data to train their models, store it for compliance purposes, or – in a worst-case scenario – suffer a data breach that exposes your information.

Why This Matters More for Mid-Sized Businesses

Large enterprises typically have robust IT governance frameworks that either block AI tools entirely or provide sanctioned alternatives. Small businesses might not handle enough sensitive data to create serious exposure. But mid-sized Australian businesses sit in a dangerous middle ground.

You have valuable intellectual property, client databases, and competitive information that could genuinely harm your business if exposed. But you might not yet have the IT infrastructure or policies to manage AI usage effectively. Your employees are sophisticated enough to find and use AI tools independently, but perhaps not trained enough to understand the security implications.

Consider this scenario: your sales manager uses a free AI tool to analyse competitor pricing data, inadvertently revealing your pricing strategy and client list. Or your marketing coordinator uploads client testimonials to an AI platform to create case studies, exposing client relationships and project details. These aren't malicious acts – they're productivity-focused employees trying to do better work.

The Australian Context

Australian businesses face particular challenges here. Our privacy laws are strict, and getting stricter. Since the 2022 amendments to the Privacy Act, serious or repeated privacy breaches can attract penalties of up to the greater of $50 million, three times the value of any benefit obtained, or 30% of adjusted turnover in the relevant period.

If your employee accidentally shares client data through an unsanctioned AI tool, and that data is subsequently breached or misused, you're still responsible under Australian privacy law. "We didn't know they were using AI" isn't a defence that will satisfy the Privacy Commissioner.

Moving from Risk to Opportunity

The solution isn't to ban AI usage – that's both impractical and counterproductive. Your competitors' employees are using AI tools too, and if you force your team to work without them, you're voluntarily accepting a productivity disadvantage.

Instead, you need to get ahead of the curve with sanctioned AI tools and clear usage policies. This means:

Providing approved alternatives: Subscribe to business versions of AI tools that offer better data protection, usage controls, and compliance features. Google Workspace's Gemini features, Microsoft 365 Copilot, or other enterprise AI tools give you the productivity benefits with proper data governance.

Creating clear policies: Your staff need to understand what's acceptable and what isn't. A simple rule like "no client data in free AI tools" is easier to follow than a complex policy document they won't read.

Training your team: Help employees understand both the benefits and risks of AI tools. Most people want to do the right thing – they just need to know what that is.

Regular monitoring: You can't manage what you don't measure. Regular checks of your network traffic, software usage, and data handling practices will help you stay on top of shadow AI usage.
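As a rough illustration of what a monitoring check might look like, the Python sketch below counts requests to a handful of well-known AI tool domains per user. It rests on assumptions: the log format (one `user domain` pair per line) is hypothetical, the domain list is illustrative and far from complete, and `ai_requests_by_user` is a name invented for this example. Real proxy or firewall logs will look different, and any monitoring should sit within your own privacy and workplace policies.

```python
from collections import Counter

# Illustrative, incomplete list of consumer AI tool domains.
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def ai_requests_by_user(log_lines):
    """Count requests to known AI domains per user.

    Assumes each log line is 'user domain' separated by whitespace
    (a hypothetical format); lines that don't fit are skipped.
    """
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines rather than guess
        user, domain = parts
        if domain.lower() in AI_DOMAINS:
            counts[user] += 1
    return counts


sample_log = [
    "alice chatgpt.com",
    "bob intranet.example",
    "alice claude.ai",
    "carol gemini.google.com",
    "malformed line here",
]
usage = ai_requests_by_user(sample_log)
print(dict(usage))
```

A count like this won't tell you what was shared, only that the tools are in use. That is usually enough to start the conversation about approved alternatives.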

Taking Action

If this article has you worried about what your employees might already be sharing, you're not alone. The research suggests this is happening across most Australian businesses right now.

The good news is that addressing shadow AI doesn't require a massive technology overhaul. It requires a structured approach that balances productivity benefits with security requirements.

Start with an honest assessment of current AI usage in your organisation. Survey your team about what tools they're using and what business information they've shared. You might be surprised by the results, but you can't address risks you don't know about.

Then focus on providing better alternatives. Business-grade AI tools with proper data governance aren't significantly more expensive than the productivity losses from either shadow AI risks or forcing employees to work without AI assistance.

Your employees want to do great work efficiently. Your job is to give them the tools to do that safely. The alternative – pretending AI isn't being used in your business – is no longer realistic.

Is Your Data Ready for AI? The Foundation Most Businesses Skip

There's a phrase that gets used a lot in enterprise technology: "garbage in, garbage out." It predates AI by several decades. But it's never been more relevant than it is right now, because the quality of AI output is directly — and in many cases brutally — dependent on the quality of the data you're working with.

Most mid-sized businesses underestimate their data readiness challenges before starting AI initiatives. They invest in tools, discover the data problem mid-project, and either scale back their ambitions or spend significant additional resources fixing issues that could have been identified up front.

Why does data readiness matter before adopting AI?

AI systems — whether you're using a commercial platform or building custom capabilities — learn from data and operate on data. If that data is incomplete, inconsistent, poorly structured, or inaccessible, the AI's outputs will reflect those problems. A customer segmentation model trained on incomplete CRM data will produce unreliable segments. A predictive demand-planning tool fed inconsistent historical sales data will produce unreliable forecasts.

The cost of discovering data problems mid-project is significant. A widely cited IBM estimate put the cost of poor data quality to US businesses at around $3.1 trillion per year in wasted resources and missed opportunities. At the project level, data quality issues discovered after an AI initiative has started are typically 3–5 times more expensive to resolve than if they had been addressed upfront.

Data readiness assessment isn't a bureaucratic hurdle on the path to AI adoption. It's a commercial decision that protects your investment in what comes next.

What does "data ready" actually mean?

Data readiness has four dimensions, and a business needs to be in reasonable shape across all of them before an AI initiative can be expected to succeed.

Availability means the data you need exists, is digitised, and is accessible to the systems that will use it. Data locked in spreadsheets on individual laptops, stored in legacy systems with no API access, or existing only on paper, isn't available for AI use, regardless of its quality.

Quality means the data is accurate, complete, and consistent. Duplicate records, missing values, inconsistent formats (dates stored as text, addresses in different formats), and outdated information all degrade AI performance.

Governance means there is clear ownership, defined standards, and documented processes for how data is created, maintained, and used. Without governance, data quality degrades over time regardless of how clean it starts. This is also where privacy, security, and compliance considerations live.

Volume means there is sufficient data for the AI application you're building or using. Some AI capabilities require substantial historical data to perform reliably. Understanding the minimum data requirements for a specific AI use case is an important part of the feasibility assessment.

How do you assess your current data quality?

A basic data readiness assessment can be conducted quickly with a structured approach. For each major data domain that your AI initiative will rely on — customer data, product data, operational data, financial data — you assess across the four dimensions: Is it available? Is it of adequate quality? Is it governed? Is there enough of it?

The most revealing questions to ask are often the simple ones: Who owns this data? When was it last audited? What percentage of records are complete? Can we export it in a standard format? How many systems does it live in? If these questions produce uncertain or inconsistent answers, that's a signal that the data foundation needs work before AI initiatives are built on top of it.
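The "what percentage of records are complete?" question is one of the easiest to answer with a few lines of code. The Python below is a minimal sketch under stated assumptions: the field names in `REQUIRED_FIELDS` and the sample customer records are hypothetical, and a real assessment would read from your CRM export rather than a hard-coded list.

```python
# Hypothetical set of fields a record must have to count as "complete".
REQUIRED_FIELDS = ["name", "email", "postcode"]


def completeness(records, required=REQUIRED_FIELDS):
    """Return the percentage of records with every required field filled in."""
    if not records:
        return 0.0
    complete = sum(
        1
        for r in records
        # Treat missing keys, None and blank strings as "not filled in".
        if all(str(r.get(f) or "").strip() for f in required)
    )
    return 100.0 * complete / len(records)


customers = [
    {"name": "Acme Pty Ltd", "email": "ops@acme.example", "postcode": "3000"},
    {"name": "Bolt & Co", "email": "", "postcode": "2000"},
    {"name": "Cargo Group", "email": "hi@cargo.example", "postcode": None},
    {"name": "Delta Ltd", "email": "team@delta.example", "postcode": "4000"},
]
print(f"{completeness(customers):.0f}% of records are complete")
```

Running the same check per data domain gives a simple, comparable number to put next to the ownership and audit questions.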

For mid-sized businesses, a focused data assessment of the core domains relevant to a specific AI initiative typically takes 2–4 weeks and produces a clear picture of what's ready to use, what needs remediation, and what represents a longer-term investment.

What's the fastest way to improve data readiness?

The fastest path is to narrow the scope. Rather than attempting to fix all data quality issues across the entire business before starting AI initiatives, identify the specific data domains required for your highest-priority AI use cases and focus remediation there.

For most mid-sized businesses, this means: establishing clear data ownership (a named person responsible for each critical data domain); running a targeted data quality cleanse on the records that the AI initiative will actually use; implementing basic data governance rules that prevent the same issues from recurring; and ensuring the data is accessible to the AI platform in the required format.

This focused approach is more pragmatic than a wholesale data transformation programme, and it generates value faster. It also yields insights into your data management practices that inform the broader investment in data governance over time.

Frequently Asked Questions

Do we need to fix all our data before we can start with AI? No, and attempting to do so would unnecessarily delay AI adoption. The goal is "fit for purpose" data, not perfect data. For each AI use case, define the minimum data quality threshold required for acceptable performance, assess whether your current data meets that threshold, and remediate the specific gaps. You can run AI initiatives in parallel with broader data quality improvement programmes.

What data quality score is "good enough" for AI? There's no universal answer — it depends on the use case and the consequences of errors. An AI tool summarising internal meeting notes can tolerate more data imperfection than an AI model used in clinical decision-making. The right question is: what's the minimum quality level where the AI's output is reliably useful, and is our data at or above that level? If you can't answer this for your specific use case, that's the first thing to establish.

Who is responsible for data readiness in a mid-sized business? In most mid-sized businesses, data readiness is a shared responsibility that sits awkwardly among IT (who manage the systems), operations (who generate the data), and whoever leads the AI initiative. This ambiguity is itself a governance problem. Best practice is to establish a named "data owner" for each critical domain — typically a senior operational person, not an IT person — who is accountable for the quality of data in that area.

Why Employee-Led AI Adoption Outperforms Top-Down Mandates

There are two ways to approach AI adoption in a mid-sized business. The first is to have leadership define the AI strategy, select the tools, develop the policies, and then communicate the change down through the organisation. The second is to actively involve employees in identifying AI opportunities, developing solutions, and driving adoption from within.

Both approaches have a role to play. But the evidence — and the experience of businesses that have done this well — strongly suggests that organisations which enable and harness employee-driven adoption achieve significantly better results than those that rely primarily on top-down mandates.

What is employee-led AI adoption?

Employee-led AI adoption doesn't mean leaving the workforce to do whatever they want with AI tools — that's a governance problem, not a strategy. It means creating the conditions where employees are empowered to identify AI opportunities in their own work, experiment within a governed framework, and champion AI adoption within their teams.

The most visible mechanism for employee-led adoption is the AI Champion programme — a network of trained, enthusiastic employees who act as peer coaches and advocates within their teams. But employee-led adoption is broader than a single programme. It's a cultural orientation that treats employees as active participants in AI adoption rather than passive recipients of it.

Why do top-down AI mandates so often fail?

Top-down AI mandates fail for the same reasons that most top-down mandates fail: they underestimate the complexity of changing how people actually work, and they overestimate what authority alone can achieve.

A mandate can require people to attend training. It can't make them learn. It can require people to use a tool. It can't make them use it effectively or enthusiastically. The difference between compliance and genuine adoption is motivation — and motivation is generated by understanding, agency, and relevance, none of which are features of a mandate.

The other limitation of purely top-down approaches is that leadership typically doesn't have granular visibility into where the highest-value AI opportunities exist in day-to-day operations. The employees doing the work have that visibility. A purely top-down approach systematically underuses this intelligence, often resulting in AI investments that are strategically logical but operationally suboptimal.

How do you create the conditions for employee-led AI adoption?

Creating the conditions for employee-led adoption involves four elements working together.

Psychological safety means employees need to feel safe to experiment, ask questions, and make mistakes without fear of negative consequences. In environments where failure is punished, people don't experiment — and AI adoption without experimentation is extremely slow.

A clear governance framework gives employees the parameters within which they can experiment. What tools are approved? What data can be used? What's the process for trying something new? Without this, well-intentioned employee experimentation risks generating the shadow AI problems discussed in a previous post.

Time and resources — even modest ones — signal that the organisation is serious about AI adoption and that employee engagement with it is valued, not merely tolerated. Dedicated time for learning and experimentation is one of the most effective investments a business can make.

Recognition and visibility for successful AI innovations creates social proof and momentum. When employees see their colleagues being recognised for finding smart ways to use AI, they're more likely to engage. This is the AI equivalent of the "bright spots" approach to change management — identifying and amplifying what's already working.

What is an AI Champion programme and how does it work?

An AI Champion programme identifies and develops a network of employees who serve as the primary conduit for AI learning and adoption within their teams. Champions are typically selected based on genuine enthusiasm and peer credibility rather than hierarchy — someone their colleagues trust and turn to for advice.

Champions receive additional training that goes beyond the general workforce programme — deeper tool knowledge, facilitation skills, and an understanding of how to help colleagues work through challenges. They then operate as embedded resources within their teams: answering questions, sharing useful tips and use cases, facilitating peer learning, and providing feedback to the central AI adoption team about what's working and what isn't.

The leverage effect of a Champion programme is significant. A single well-trained Champion supporting a team of 15 people effectively multiplies the reach of the formal training investment many times over. Champions also build the kind of informal social proof that formal programmes can't replicate: "I saw Sarah using this to cut her monthly report from a half day to an hour" is more persuasive than any official communication.

Frequently Asked Questions

How do you prevent employee-led AI from becoming ungoverned shadow AI? The key is that employee-led adoption operates within a defined governance framework, not outside it. This means having a clear AI policy that defines approved tools and data use, a visible process for employees to propose and try new tools within sanctioned parameters, and a Champion network that reinforces governance norms alongside promoting adoption. Employee-led and well-governed are not in conflict — they require a framework that enables both.

Which employees make the best AI Champions? The best Champions are enthusiastic about AI (intrinsically, not just because they were asked), respected by their peers for competence rather than just hierarchy, communicative and patient, and genuinely interested in helping others rather than showcasing their own knowledge. Technical background helps but isn't essential — some of the most effective Champions are deeply functional users of AI tools with no formal technology background.

How do you measure the success of employee-led AI adoption? The primary metrics are adoption rate (what percentage of employees are actively using AI tools in their work), capability improvement (assessed before and after), and business impact (time saved, quality improvements, process efficiency gains). Secondary metrics include Champion programme engagement, training completion rates, and sentiment scores from regular pulse surveys. The combination of behavioural metrics (what people are actually doing) and outcome metrics (what it's delivering for the business) gives the fullest picture.

How to Measure AI ROI: What to Track and What to Ignore

AI investment is increasing across most mid-sized businesses. So is the pressure to demonstrate that it's working. But measuring the return on AI investment is harder than measuring most technology investments — and the most natural approach (applying traditional ROI frameworks directly) often produces misleading results that either undervalue what AI is delivering or, worse, justify continued investment in initiatives that aren't actually generating value.

Understanding how to measure AI ROI properly is increasingly important for business leaders who need to make resource allocation decisions and justify AI investment to boards and leadership teams.

Why is measuring AI ROI so difficult?

Three structural features of AI adoption make measurement more complex than traditional technology investment.

First, AI benefits are often diffuse. A CRM with AI-powered lead scoring doesn't generate a line item in your accounts. It improves the conversion rate of a process that involves multiple people, tools, and inputs. Attributing a financial outcome to the AI component, rather than to the sales team, the product, the pricing, or other variables, requires careful measurement design.

Second, AI value accrues over time in ways that don't show up in short-term measurement windows. An organisation that invests in AI capability in year one may not see the full financial impact until year two or three, as the capability matures, skills develop, and AI is integrated more deeply into core processes. Applying a 90-day ROI lens to a multi-year capability investment will always produce disappointing results.

Third, some of the most significant AI value is in things that are hard to quantify: decision quality, employee satisfaction, risk reduction, and competitive positioning. These matter to the business but don't show up cleanly in a standard ROI calculation.

What are the right metrics for AI adoption success?

Effective AI measurement uses a layered framework that tracks metrics at three levels: adoption, performance, and business impact.

Adoption metrics measure whether AI is actually being used: active user rates, tool engagement, feature utilisation, and training completion. These are leading indicators — low adoption tells you early that value realisation is at risk, before it shows up in business metrics. Gartner research suggests that tracking adoption within the first 90 days of deployment is one of the strongest predictors of long-term AI programme success.

Performance metrics measure whether AI is doing what it's supposed to do: accuracy rates, error rates, processing times, output quality scores. These vary by use case but should be defined specifically for each AI application. A content generation tool should have quality metrics; a predictive analytics tool should have accuracy metrics; a process automation should have throughput and error rate metrics.

Business impact metrics measure what the AI investment is delivering at the commercial level: revenue impact, cost reduction, time savings converted to capacity, customer satisfaction improvements, and risk reduction. These are the metrics that matter most to leadership and boards, and they require the most careful measurement design to attribute correctly.

How do you build an AI measurement framework?

The most common mistake is trying to measure everything. An effective AI measurement framework focuses on the metrics that matter most for each specific initiative, measured consistently over a meaningful time period.

The framework should be designed before the AI initiative launches, not retrofitted afterwards. This means: defining the specific business outcome the AI investment is intended to deliver; identifying the metrics that will indicate whether that outcome is being achieved; establishing baseline measurements before the AI goes live; defining the measurement cadence (how often, who is responsible, how results are reported); and agreeing on the performance threshold that constitutes success.
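The baseline-and-threshold idea comes down to simple arithmetic. The Python sketch below shows one way to express it, on the assumption that each metric is a single number measured before and after go-live. The function names and the example figures (hours per monthly report, a 20% target) are hypothetical.

```python
def improvement_pct(baseline, current, lower_is_better=False):
    """Percentage improvement of `current` relative to `baseline`.

    Set lower_is_better=True for metrics like time or error rate,
    where a smaller number is the better outcome.
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    change = (baseline - current) if lower_is_better else (current - baseline)
    return 100.0 * change / baseline


def meets_target(baseline, current, target_pct, lower_is_better=False):
    """True if the improvement reaches the agreed success threshold."""
    return improvement_pct(baseline, current, lower_is_better) >= target_pct


# Hypothetical example: a monthly report took 4.0 hours before the AI tool
# and 3.0 hours after; the agreed success threshold was a 20% reduction.
result = improvement_pct(4.0, 3.0, lower_is_better=True)
print(f"Improvement: {result:.0f}%")
print("Target met:", meets_target(4.0, 3.0, 20.0, lower_is_better=True))
```

None of this works without the baseline, which is why it has to be captured before launch.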

For most mid-sized businesses, a simple dashboard tracking 4–6 key metrics per major AI initiative is more useful than a comprehensive measurement system. The goal is decision-useful data — information that tells leadership whether an AI investment is performing as expected and what, if anything, needs to change.

What does good AI ROI look like for a mid-sized business?

Based on research from McKinsey and Deloitte, mid-sized businesses that execute AI adoption well can typically expect measurable productivity improvements of 15–40% in targeted processes within 12–18 months, cost reductions of 10–30% in automated functions, and revenue impact through improved conversion rates, customer retention, or new capability in the range of 5–15%. These ranges are wide because the specific outcome depends heavily on the use case, the quality of the data, and the effectiveness of the adoption programme.

The single most important predictor of AI ROI isn't the technology chosen; it's the completeness of the adoption approach. Businesses that invest in strategy, technology, and people in combination consistently outperform those that invest in technology alone. This is the most consistent finding in the research on AI adoption outcomes, and it matches what we see in practice.

Measurement is itself part of a complete adoption approach. Businesses that measure rigorously, learn from what the data tells them, and adjust their approach accordingly are the ones that turn initial AI investments into compounding advantage over time.

Frequently Asked Questions

When should we start measuring AI ROI? Measurement should start before the AI initiative launches, with baseline data. Adoption metrics should be tracked from day one of go-live. Performance metrics typically take 4–8 weeks to stabilise as users become proficient and the AI system learns from live data. Business impact metrics should be assessed at 3, 6, and 12 months, with the expectation that the 12-month data will be the most meaningful.

What's a realistic timeframe for positive AI ROI? For well-executed initiatives targeting clear productivity or cost outcomes, positive ROI within 6–12 months is achievable. For more complex capability-building programmes, 12–18 months is more realistic. The key variable is whether the investment was scoped correctly in the first place — AI initiatives with vague or over-broad objectives consistently take longer to deliver measurable returns than those with specific, well-defined success criteria.

Who should own AI measurement in the business? AI measurement is most effective when owned jointly by the business leader of the function where the AI is deployed and the team managing the AI adoption programme. The function leader owns the business impact metrics (they're accountable for the commercial outcome); the adoption team owns the adoption and performance metrics. Where these two groups review data together regularly, the feedback loop between technical performance and business outcome is much tighter, and course-correction happens faster.

Not sure where your business stands with AI?

Find out your AiDOPTION Score — a free 10-minute diagnostic that measures your AI readiness across Strategy, Technology, and People. You'll get a personalised score and practical recommendations.

The AI Pilot Trap: Why Your Experiments Aren't Turning Into Results

Many mid-sized businesses have been running AI experiments for months — sometimes years. A chatbot here, an automation there, a team using an AI writing tool. Some of these pilots have been genuinely impressive. But they haven't turned into business results at scale. The business is still waiting for the breakthrough moment.

This is the AI pilot trap. And it's one of the most common patterns we see in organisations that are trying to adopt AI seriously but aren't getting the commercial outcomes they were promised.

What is the AI pilot trap?

The AI pilot trap is the state where an organisation has multiple AI experiments running simultaneously — some of which show genuine promise — but none of them have scaled into standard operating practice, and the aggregate impact on the business is negligible.

It's characterised by a few telltale signs: a growing list of "proof of concept" projects that never graduate to production; a small group of enthusiastic early adopters surrounded by a much larger group of people who haven't engaged with AI at all; positive pilot reports that don't translate into measurable business metrics; and increasing frustration at the leadership level that AI investment isn't delivering returns.

According to BCG research, roughly 70% of companies have launched AI pilots, but fewer than 30% have successfully scaled their AI initiatives across the business. The pilot trap is the norm, not the exception.

Why do AI pilots succeed but fail to scale?

There are several structural reasons why pilots work in isolation but stall at scale, and understanding them is the first step to breaking out of the trap.

First, pilots are typically run by the most motivated people in the organisation — early adopters who are intrinsically interested in AI and willing to invest personal time to make it work. These people can make almost anything succeed in a controlled environment. Scaling requires getting less motivated, less technical people to the same level of capability. That's a fundamentally different challenge.

Second, pilots rarely surface the integration challenges that come at scale. A standalone AI tool can run on its own data without touching existing systems. When you try to embed that same capability into actual business workflows, you hit data quality problems, integration complexity, and security requirements that simply don't exist in a sandboxed experiment.

Third, pilots are rarely designed with scaling in mind. Success metrics are usually about the technology working, not about business impact. When leadership asks "what did we get for this?", there's often no clear answer — because the pilot wasn't designed to measure business outcomes.

How do you break out of the AI pilot trap?

Breaking out of the pilot trap requires a fundamental shift in how you think about AI adoption — from experimentation to strategy.

The first step is to audit your current pilots honestly. What are you running? What did it cost? What business outcome was it designed to deliver? What's the measured result? Many organisations have never done this rigorously, and when they do, they find that most of their AI investment is dispersed across low-value experiments rather than concentrated on high-value outcomes.

The second step is to define clear AI priorities at the business level. Not "we want to use AI" but "we want AI to reduce our customer service response time by 40% in the next 12 months." Specificity forces you to choose, allocate resources, and measure outcomes. Without it, you end up spreading effort across everything and achieving impact in nothing.

The third step is to build a path from pilot to production. This means defining, before you start, what success looks like, what scale looks like, and what the steps are between a working prototype and a live business capability. Governance, integration, training, and change management all need to be planned before the pilot starts, not bolted on afterwards.

What's the difference between an AI experiment and an AI strategy?

An AI experiment is a test of whether a technology can do something. An AI strategy is a plan for using technology to achieve specific business outcomes. The difference sounds simple, but in practice it's significant.

An experiment asks: "Can AI do this?" A strategy asks: "What should AI do to make our business better, by how much, and how will we make that happen?" The strategy has a target, a timeline, a resource allocation, a measurement framework, and an owner. The experiment has a hypothesis and a sandbox.

Most businesses in the AI pilot trap have lots of experiments and no strategy. The path out is to stop asking whether AI can do things and start asking what you actually want it to deliver — then build backward from that answer.

Frequently Asked Questions

How many AI pilots is too many? There's no magic number, but if your organisation is running more pilots than it can actively monitor, resource, and assess — you have too many. Three to five focused pilots with clear success criteria is generally far more productive than fifteen loosely managed experiments. Fewer, better-designed pilots lead to faster, more credible learnings.

When should we stop piloting and start scaling? A pilot is ready to scale when it has demonstrated measurable business impact (not just technical success), has a clear integration path into existing systems and workflows, and has an owner who is accountable for the scaled outcome. If you can't answer all three, the pilot isn't ready — regardless of how impressive it looks in a demo.

What does a successful pilot-to-production path look like? It starts with a clear definition of "done" at the pilot stage — specific metrics, not general impressions. Then it moves through a structured scale-up: technical integration, data governance, user training, change management, and a phased rollout with checkpoints. The key is treating the transition from pilot to production as its own project, with its own timeline and resources — not an afterthought.

How to Talk to Your Team About AI Without Creating Fear or Resistance

The way you communicate about AI inside your organisation may matter more than any technology decision you make. Get the communication right and you create an environment where people are curious, engaged, and willing to learn. Get it wrong and you generate the kind of sustained, quiet resistance that can undermine even the most well-resourced AI adoption programme.

Internal AI communication is an area where the stakes are high, and the guidance is limited. Most of what's written about AI communication focuses on external messaging — customer-facing communication, PR. Internal communication is comparatively neglected, and the consequences of that show up in failed adoptions.

Why do employees resist AI adoption?

The most honest answer is: because they're not sure what it means for them. And in the absence of clear information, people fill the gap with the most available narrative — and the most available narrative about AI is overwhelmingly one of job displacement.

Research from Edelman's 2024 Trust Barometer found that 58% of workers are worried about AI's impact on their jobs. That anxiety doesn't disappear when a business starts adopting AI; it intensifies, particularly when the communication is unclear or absent. Employees who feel uncertain about their future are less willing to invest in learning new tools, less likely to engage positively with adoption programmes, and more likely to become passive resisters who slow down implementation.

Resistance is rarely dramatic. It shows up as: low engagement with training programmes, minimal use of new tools, passive non-compliance, and an undercurrent of negative conversation that spreads through informal networks faster than any official communication can counter it.

What should your internal AI communication cover?

Effective internal AI communication addresses four core questions that employees — consciously or not — are asking.

First: Why? Why is the business adopting AI? What problem does it solve? What opportunity does it create? Communication that leads with business rationale, explained plainly, gives employees context that makes everything that follows more meaningful.

Second: What? What specifically is changing? Which tools? Which processes? Which teams? Vague communication about "AI transformation" generates anxiety. Specific communication about "we're introducing an AI assistant to help the sales team with proposal drafting" is manageable and concrete.

Third: What does it mean for me? This is the question employees most want answered and the one most often left unanswered in internal communications. Directly addressing the job security question — honestly, not with empty reassurance — builds more trust than avoiding it.

Fourth: What do I need to do? Clear, specific guidance about what employees are expected to do in response to the change reduces uncertainty and provides a sense of agency. Uncertainty is stressful. Knowing what's expected and how to get there is manageable.

How do you time AI communications effectively?

Timing is one of the most underrated elements of change communication. Gartner research on technology adoption highlights a consistent pattern: communications that arrive too early (before people can take any action) generate anxiety; communications that arrive too late (after changes are already visible) generate distrust.

The right approach is sequenced communication that runs ahead of but in parallel with the adoption programme. Initial communications should come from senior leadership, explain the strategic rationale, and acknowledge the change that's coming without overwhelming people with detail. Middle-stage communications should become more specific as the adoption gets closer — what tools, what processes, what support is available. Launch communications should be practical and action-oriented — here's what you need to do, here's where to get help.

Post-launch communication is often forgotten but critically important. The period immediately after go-live is when most user uncertainty peaks, when confusion is highest, and when the risk of disengagement is greatest. Proactive, supportive communication in this period — acknowledging the challenges, celebrating early wins, directing people to help resources — makes a significant difference to adoption rates.

What communication mistakes cause the most damage during AI adoption?

Four mistakes consistently appear in failed AI adoption programmes.

Silence. The absence of communication doesn't reduce anxiety — it amplifies it. In the absence of official communication, informal networks fill the gap with speculation and rumour.

Overselling. Communications that promise transformational outcomes without acknowledging the effort and change required erode trust quickly when reality doesn't match the messaging.

One-way communication. Employees who feel heard are far more receptive to change than those who receive only broadcast messages. Building in feedback mechanisms — surveys, forums, manager conversations — is as important as the outbound communication.

Inconsistency. When leaders say different things, or when the official message doesn't match what employees see happening, credibility collapses. Ensuring consistency across senior leadership before any communication goes out is non-negotiable.

Frequently Asked Questions

Should leaders communicate about AI before or after the strategy is finalised? Some communication should happen before strategy is finalised — specifically to acknowledge that AI is being evaluated and that employees will be kept informed. The risk of waiting for a complete strategy is that employees hear rumours and fill the gap themselves. An early, honest "we're working on this and we'll keep you informed" is far better than silence followed by a fully formed announcement.

How do you address job loss fears specifically? Directly and honestly. Vague reassurance ("AI won't replace jobs") isn't believed and often backfires. A more effective approach is to be specific about the roles and tasks that will and won't be affected, to explain what the business is investing in to support people through the transition, and to acknowledge that some roles will change significantly. Acknowledging reality while demonstrating genuine commitment to employee support is far more trust-building than unconvincing blanket reassurance.

What format works best for AI communications — all-hands, email, or one-on-ones? All three, at different stages. All-hands or town hall formats work well for initial strategic announcements — they signal that this is important and give everyone the same information at the same time. Written communications (email, intranet) work well for detailed, reference-quality information that people can return to. Manager-to-team conversations are most effective for addressing individual concerns and questions — no one feels comfortable asking "will I lose my job?" in a company-wide forum. A multi-channel approach, sequenced appropriately, is almost always more effective than relying on a single format.

Shadow AI: The Hidden Risk Growing Inside Your Business Right Now

There's an AI adoption challenge most business leaders aren't aware of — not because it's new, but because by its very nature it's difficult to see. It's called shadow AI, and there's a very good chance it's already happening in your organisation.

Understanding what it is, why it matters, and how to address it without destroying the productivity gains that come with it is one of the most important governance challenges for mid-sized businesses right now.

What is shadow AI?

Shadow AI refers to the use of AI tools by employees without the knowledge, approval, or governance oversight of the organisation. It's the direct equivalent of "shadow IT" — the unauthorised use of software and systems that organisations have dealt with for decades — but with a new layer of risk unique to AI.

In practice, shadow AI looks like employees using ChatGPT, Claude, Gemini, or other consumer AI tools to handle work tasks. Writing emails and reports. Summarising documents. Analysing data. Drafting proposals. These tools are powerful, accessible, and free or very cheap — so people use them, often without realising there's any issue with doing so.

According to Microsoft's 2024 Work Trend Index, 78% of AI users at work are bringing their own AI tools rather than using employer-provided ones. In other words, shadow AI isn't a fringe behaviour. It's mainstream.

Why is shadow AI a risk for mid-sized businesses?

The risks fall into three main categories: data security, compliance, and quality.

Data security is the most immediate concern. When employees paste company data — client information, financial data, internal strategies, HR records — into a consumer AI tool, that data is being sent to a third-party server. Depending on the tool's terms of service, it may be used to train future models. Even where it isn't, it's outside your control and potentially outside your data jurisdiction.

Compliance risk is significant for organisations in regulated industries. Healthcare, financial services, and professional services businesses often have strict obligations around data handling that consumer AI tools don't meet. Using these tools with sensitive data can trigger regulatory consequences that far outweigh any productivity benefit.

Quality risk is less obvious but real. AI tools confidently produce incorrect information. Without organisational guardrails, employees may act on AI-generated content that is factually wrong, legally risky, or strategically misaligned — without realising it.

How do you find out if shadow AI is happening in your organisation?

The honest answer is: assume it's happening, and verify. Waiting for it to become visible — usually through a data incident or compliance issue — is the worst possible approach.

Practical discovery methods include: running an anonymous staff survey asking about AI tool usage (framed positively, not as a compliance audit); reviewing your network traffic logs for connections to known AI tool domains; and having direct, open conversations with team leaders about what their teams are using.

The goal of discovery is understanding, not punishment. Most shadow AI usage is the result of motivated employees trying to do their jobs better with the best tools available. The response should be a governance framework that channels that motivation productively, not a crackdown that drives the behaviour further underground.
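
The network log review mentioned above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the log format (timestamp, source address, hostname, separated by whitespace) is made up for the example, the function name is hypothetical, and the domain list is only a starting point you would maintain yourself. It counts hits per tool rather than per person, in keeping with discovery aimed at understanding rather than punishment.

```python
from collections import Counter

# Illustrative list only; maintain your own as tools come and go.
AI_TOOL_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}


def count_ai_tool_hits(log_lines, domains=AI_TOOL_DOMAINS):
    """Count requests per AI tool domain.

    Assumes each log line is whitespace-separated and the requested
    hostname is the third field (a made-up format for this sketch).
    """
    hits = Counter()
    for line in log_lines:
        fields = line.split()
        if len(fields) < 3:
            continue  # skip malformed lines
        host = fields[2].lower()
        for domain in domains:
            # Match the domain itself or any subdomain of it.
            if host == domain or host.endswith("." + domain):
                hits[domain] += 1
                break
    return hits


# Hypothetical sample lines, not real traffic.
sample_log = [
    "2024-05-01T09:00:01 10.0.0.12 chatgpt.com",
    "2024-05-01T09:00:05 10.0.0.15 intranet.example.com",
    "2024-05-01T09:01:10 10.0.0.12 claude.ai",
    "2024-05-01T09:02:30 10.0.0.31 chatgpt.com",
]

print(count_ai_tool_hits(sample_log))
```

Real proxy or DNS logs will have a different layout, so the field position would need adjusting for your environment.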

How do you govern AI without killing productivity?

The answer is to replace prohibition with policy. Banning AI tools rarely works — people find workarounds, and you lose the productivity benefits that come with legitimate use. What works is a clear, practical AI policy that tells people what they can use, what they can't, what data they can and can't share with AI tools, and what to do when they're unsure.

An effective AI governance framework for a mid-sized business covers four areas. First, approved tools — a defined list of AI tools that have been security-assessed and are sanctioned for use, with clear guidance on which tools are appropriate for which types of work. Second, data classification — a simple framework that tells employees what type of information can be used with external AI tools and what must stay in controlled environments. Third, an approval process for new tools — a lightweight mechanism that lets employees request assessment of new AI tools rather than just bypassing approval. And fourth, regular review — AI tools and capabilities change rapidly, so the policy needs to be a living document, not a one-time exercise.

The goal is to make the compliant path the easy path. If using approved AI tools is simple, well-supported, and clearly more capable than consumer alternatives, most employees will choose it without being told to.
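
To make the approved tools and data classification ideas concrete, here is a small sketch of how such a policy could be written down as a lookup. Everything in it is an assumption for illustration: the three data classes, the tool names, and the rule that unlisted tools are refused until assessed. A real policy would use your own categories and tools.

```python
from enum import Enum


class DataClass(Enum):
    """Hypothetical sensitivity levels, lowest to highest."""
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3


# Hypothetical policy: the most sensitive data class each approved tool may receive.
APPROVED_TOOLS = {
    "enterprise_assistant": DataClass.CONFIDENTIAL,
    "consumer_chatbot": DataClass.PUBLIC,
}


def is_use_allowed(tool: str, data_class: DataClass) -> bool:
    """Return True if the policy permits sending this class of data to the tool."""
    max_allowed = APPROVED_TOOLS.get(tool)
    if max_allowed is None:
        # Unlisted tools are not allowed until they go through the approval process.
        return False
    return data_class.value <= max_allowed.value


print(is_use_allowed("enterprise_assistant", DataClass.CONFIDENTIAL))
print(is_use_allowed("consumer_chatbot", DataClass.INTERNAL))
print(is_use_allowed("new_unreviewed_tool", DataClass.PUBLIC))
```

A table this small is easy to publish alongside the written policy, which helps keep the compliant path the easy path.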

Frequently Asked Questions

Is using ChatGPT at work considered shadow AI? It depends on your organisation's policies. If your business has no AI policy and employees are using ChatGPT for work tasks, that's technically shadow AI — unsanctioned use of an external tool. Whether it's a risk depends on what data they're sharing with it. Using ChatGPT to draft a generic email is very different from using it to summarise a confidential client document.

What data is most at risk from shadow AI? The highest-risk categories are: personally identifiable information (PII), client or customer data, financial data, internal strategic plans, legal documents, and HR records. Any data that you would restrict access to internally should also be restricted from use in external AI tools.

How do we create an AI policy that staff will actually follow? The most effective AI policies are short, clear, and explain the "why" behind the rules. They distinguish between different scenarios with concrete examples, rather than speaking only in abstractions. And they provide a clear process for employees to ask questions or request approval for new tools, so the policy feels like guidance rather than a barrier. Policies created with input from staff are also significantly more likely to be followed than ones handed down from leadership.

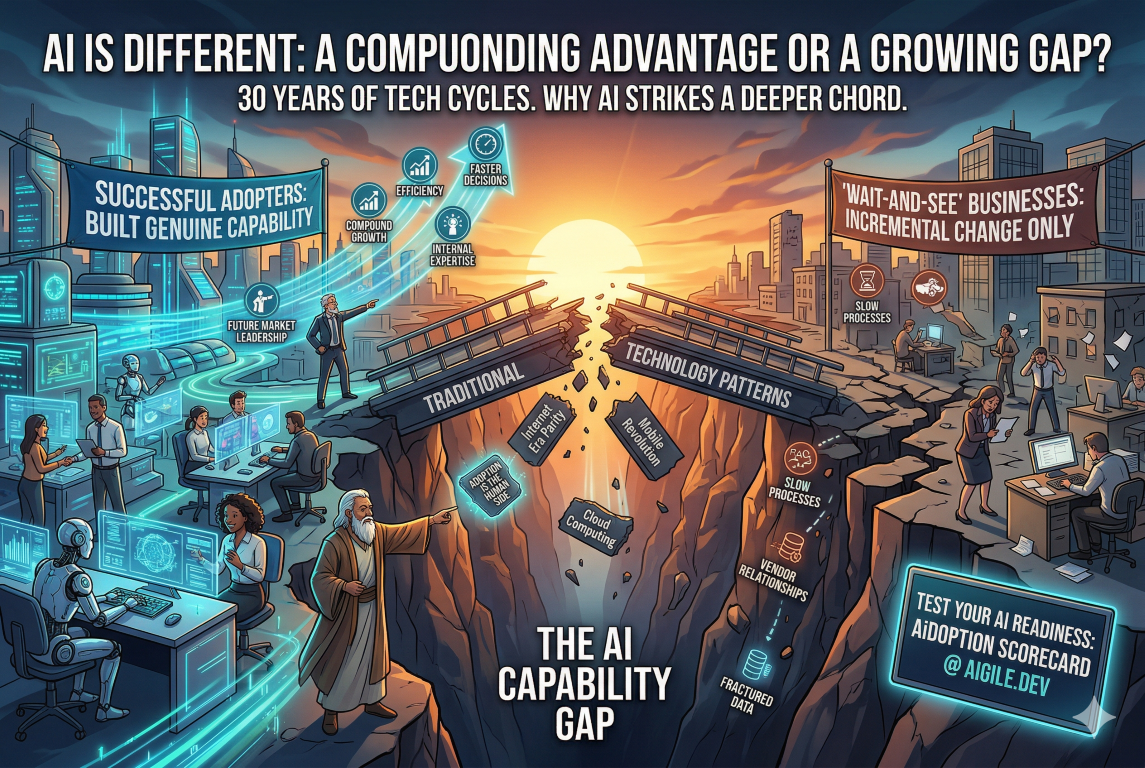

30 Years in Technology Taught Me This About AI: It's Different This Time

I've been working in technology since the early 1990s. I've lived through the commercialisation of the internet, the dot-com boom and bust, the arrival of mobile, the rise of cloud computing, social media, and a dozen other waves that were each described, at the time, as the most transformative technology shift in a generation.

So when I tell you that AI is different — genuinely different, in ways that make most of what we've experienced before look like incremental change — I want you to understand that it's not something I say lightly. And I want to explain why, based on three decades of watching technology cycles, I believe that.

Why does every technology cycle feel different — and why this one actually is?

Every major technology wave comes with a version of the same claim: this changes everything. And in most cases, the claim is both true and overstated. The internet did change everything. It also took fifteen years to fully manifest in mainstream business practice, and the "everything" it changed was more narrowly defined than the early evangelists suggested.

The pattern I've observed across multiple cycles is consistent: initial excitement, early adoption by the technically bold, a reality check when the hard implementation challenges emerge, gradual maturation, and eventually mainstream adoption that is genuinely transformative but slower and less dramatic than the peak of the hype cycle suggested.

AI is following this pattern. But there are three things about this wave that make it substantively different from everything I've worked with before, and they have direct implications for how mid-sized businesses should be thinking about their response.

What have 30 years of technology change taught me about adoption?

The most consistent lesson from 30 years of digital product development is that technology succeeds when it solves real problems for real people and fails when it's deployed for its own sake. This sounds obvious, but it's violated constantly.

I've seen large organisations spend years and significant capital on technology implementations that never delivered meaningful business outcomes because the adoption question was never properly answered. The technology worked. The people didn't change. And a technology that nobody uses delivers no value regardless of its capability.

The second consistent lesson is that the human side of technology adoption is always the harder problem. Technical implementation, while complex, is typically more predictable than people change. The businesses that succeed with technology consistently invest disproportionately in change management, training, and culture — not just infrastructure and software.

The third lesson is that the organisations that build genuine capability — rather than buying a solution and assuming it will work — compound their advantage over time. The businesses I've seen succeed with every major technology wave are those that developed internal expertise, not just vendor relationships.

How is AI different from every previous technology wave?

Three things distinguish this wave from what came before.

First, the breadth of application is unprecedented. Previous technology waves had wide but ultimately bounded impacts. The internet transformed information exchange and commerce. Mobile transformed communication and location-based services. AI has the potential to augment almost every cognitive task performed in almost every business function. The scope is genuinely different.

Second, the pace of capability development is accelerating in a way it didn't in previous technology waves. The internet developed quickly, but the capability curve for AI (the rate at which systems are becoming more capable) is steeper and shows fewer signs of plateauing. Businesses are in a position where the landscape is changing under their feet in real time, not just at product launch cycles.

Third, AI is, for the first time, creating a meaningful capability gap at the level of everyday operational work between businesses that adopt it well and those that don't. Previous technology waves largely created parity: when everyone has a website, having a website doesn't differentiate you. AI, done well, builds into a compounding advantage: better processes, better decisions, better products, delivered faster, at lower cost. That gap, once established, is hard to close.

What does this mean for business leaders today?

It means the strategic response can't be "wait and see." The businesses that wait for AI to mature before engaging will find themselves behind a curve that's already steep and getting steeper.

It also doesn't mean running at every AI opportunity simultaneously. The businesses I've seen succeed with major technology transitions are those that make deliberate, strategic choices about where to focus, build deep capability in those areas, and then expand from a position of genuine competence rather than scattered experimentation.

The question isn't whether to adopt AI. It's how to do it in a way that builds lasting capability — in your strategy, your technology, and most importantly, your people. Those three things together are what turn AI investment into business outcomes that actually show up in your results.

That's why I founded AiGILE. Not to sell technology, but to help businesses build the genuine AI capability that I've seen make the difference, over and over, for thirty years.

Frequently Asked Questions

Is AI really different from previous automation waves? Yes, in a meaningful way. Previous automation waves primarily replaced repetitive physical tasks (manufacturing) or highly structured cognitive tasks (data processing). AI can augment and in some cases replace judgment-intensive, unstructured cognitive work — writing, analysis, design, strategy support, and customer interaction. The scope of what can be affected is substantially broader than previous automation.

How long will it take for AI to fully transform most businesses? Based on historical technology adoption patterns, mainstream transformation of business operations will take 7–15 years. But the leading adopters will build substantial advantages within 2–5 years that will be very difficult for laggards to close. The question isn't about the end state — it's about where you want to be in the competitive landscape in three to five years.

What's the first thing a business leader should do about AI today? Understand where your business currently stands. Not with a gut feeling, but with a structured assessment across strategy, technology, and people dimensions. You can't make good decisions about where to invest without an honest baseline. The AiDOPTION Scorecard is designed to give you exactly that — a clear, specific picture of your current AI readiness and where the priority gaps are.

The AI Skills Gap Is Here. Is Your Team Ready?

Every significant technology transition creates a skills gap. The internet created demand for web developers, digital marketers, and e-commerce specialists that didn't exist a decade earlier. Mobile created demand for app developers and UX designers. AI is creating its own wave of new skill requirements — and unlike previous transitions, it's affecting almost every role across almost every function.

For mid-sized businesses, the AI skills gap represents both a risk and an opportunity. The risk is being outpaced by competitors who invest in building AI capability earlier and more systematically. The opportunity is that businesses that invest in their people's AI skills now will compound that advantage over time.

What is the AI skills gap and why does it matter now?

The AI skills gap is the difference between the skills employees currently have and the skills they need to work effectively in an AI-enabled environment. It's not primarily about knowing how to build AI systems — that's a technical specialisation. It's about knowing how to use AI tools effectively in everyday work, understanding AI's capabilities and limitations, knowing how to evaluate AI outputs critically, and being able to adapt workflows to take advantage of AI assistance.

Research from McKinsey and BCG consistently shows that the biggest barrier to AI adoption isn't the technology — it's the people. A 2024 BCG survey found that more than 80% of organisations identified employee capability as one of their top two barriers to scaling AI. The technology is advancing faster than the workforce's ability to use it effectively.

The urgency is real. Businesses that wait for the AI skills question to resolve itself — hoping employees will pick up what they need organically — are accumulating a gap that becomes progressively harder to close.

What skills do employees actually need for AI?

AI skills fall into three levels, and not all employees need all three.

Foundational AI literacy applies to everyone. This means understanding what AI can and can't do, knowing which tools are available and appropriate for which tasks, being able to spot when AI output needs checking, and understanding the basic principles of responsible AI use (privacy, accuracy, attribution). This level of skill should be universal across the organisation.

Functional AI proficiency applies to anyone whose role involves significant use of AI tools. This means being able to write effective prompts to get high-quality outputs, use AI tools efficiently as part of day-to-day workflows, critically evaluate and edit AI-generated content, and apply AI appropriately to the specific demands of their function.

Advanced AI capability applies to a smaller group of people — analysts, developers, and process owners — who need to configure AI tools, design AI-enabled workflows, or work with AI in technically complex ways. This level typically requires more formal training and often a technical background.

How do you assess your team's current AI capability?

The starting point is an honest, structured capability assessment. This isn't just a survey asking whether people have used AI tools — it needs to assess actual proficiency across the dimensions that matter for your business.

A basic capability assessment covers: current AI tool usage (what, how often, for what purpose); self-assessed confidence in using AI effectively; understanding of AI's limitations and responsible use principles; and function-specific skill requirements. This should be done at the team level, not just individually, because the unit of deployment is usually a team workflow rather than an individual task.

The assessment should produce a clear picture of where your workforce currently sits across the three levels, where the priority gaps are relative to your AI strategy, and which teams or functions need the most investment to close those gaps.
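As a rough illustration of what that picture could look like in practice, the sketch below tallies assessment responses by team and ranks teams by the share of people sitting below a target level. The level names, the "none" level, the team names, and the targets are all hypothetical placeholders, not part of any AiGILE tool.

```python
from collections import Counter, defaultdict

# Hypothetical ordering: "none" is an assumed level below the three described above.
LEVELS = ("none", "foundational", "functional", "advanced")


def summarise_by_team(responses):
    """Count how many people in each team sit at each level."""
    summary = defaultdict(Counter)
    for response in responses:
        summary[response["team"]][response["level"]] += 1
    return {team: dict(counts) for team, counts in summary.items()}


def priority_gaps(responses, targets):
    """Rank teams by the share of people below that team's target level."""
    below = Counter()
    total = Counter()
    for response in responses:
        team = response["team"]
        total[team] += 1
        if LEVELS.index(response["level"]) < LEVELS.index(targets[team]):
            below[team] += 1
    gaps = {team: below[team] / total[team] for team in total}
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)


# Made-up assessment results, for illustration only.
responses = [
    {"team": "Sales", "level": "foundational"},
    {"team": "Sales", "level": "none"},
    {"team": "Finance", "level": "functional"},
    {"team": "Finance", "level": "foundational"},
    {"team": "Finance", "level": "functional"},
]
# Assumed targets derived from a hypothetical AI strategy.
targets = {"Sales": "functional", "Finance": "functional"}

print(summarise_by_team(responses))
print(priority_gaps(responses, targets))
```

In this made-up data, Sales comes out as the priority because everyone on the team is below the target level, while only one in three people in Finance is.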

What's the best approach to building AI skills in your workforce?

The single biggest mistake organisations make with AI skill-building is treating it as a one-off training exercise. A single workshop or e-learning module doesn't build lasting capability. Skill development that actually changes how people work requires a sustained, multi-modal approach.

Effective AI capability-building combines formal learning (structured training on specific tools and principles) with experiential learning (applying AI in real work tasks with coaching support), peer learning (AI champions and communities of practice within the organisation), and self-directed learning (access to resources for ongoing development). The mix should be designed around the specific needs and learning preferences of different groups, not as a one-size-fits-all approach.

Critically, skill-building should be connected to the specific AI tools and workflows the organisation is adopting — not generic AI content. Learning is most effective when it's immediately applicable to real work. Employees who understand why they're learning something, and can use it the next day, develop skills far faster than those learning in the abstract.

Frequently Asked Questions

Do all employees need AI skills or just specific teams? At the foundational level (basic AI literacy and responsible use principles), every employee needs them. AI is increasingly present in the tools most employees use every day, whether or not those employees are consciously aware of it. Functional and advanced skills can be targeted to the roles and teams where they'll have the most impact. A phased approach, starting with high-impact functions and expanding over time, is practical for most mid-sized businesses.

Is "training" enough to close the AI skills gap? Training alone is not enough. Research consistently shows that skills learned in isolation don't transfer reliably to real-world application without reinforcement. Effective capability-building requires training, practice, and support. This is why we at AiGILE use the term "learning" rather than "training": it signals a broader approach that goes beyond a one-off programme to build genuine, lasting capability.

How long does it take to build meaningful AI capability in a team? For foundational AI literacy, a well-designed programme can produce meaningful improvement in 4–6 weeks. For functional proficiency with specific tools, 8–12 weeks of supported practice is typically required to reach reliable competence. Advanced capability development is more variable, but typically takes 3–6 months of structured learning and application. The key variable is whether learning is connected to real work: theoretical learning alone takes longer and sticks less well.

Build, Buy, or Augment? How to Make the Right AI Technology Decision

One of the most consequential decisions a mid-sized business makes on its AI journey is also one of the least discussed: should you build your own AI capabilities, buy off-the-shelf AI tools, or augment your existing systems with AI? Get this decision right and your AI investments compound over time. Get it wrong, and you can spend 12–18 months on the wrong path before realising it.

The good news is that this decision is more straightforward than it looks, once you understand the trade-offs clearly.

What are the three AI technology options for mid-sized businesses?

The "build, buy, or augment" framework covers the three fundamental approaches to acquiring AI capability:

Build means developing custom AI solutions specifically for your business. This could mean training your own models, building custom AI-powered applications, or developing bespoke automations tailored to your exact workflows and data.

Buy means purchasing off-the-shelf AI products — tools, platforms, or SaaS solutions that come with AI capabilities built in. This category now includes most major business software: CRMs with AI features, analytics platforms with predictive capabilities, and dedicated AI tools for specific functions.

Augment means adding AI capabilities to your existing systems — integrating AI APIs into current platforms, layering AI assistants onto existing workflows, or connecting existing tools to AI services without replacing those tools.

The optimal strategy for most mid-sized businesses involves all three, applied to different parts of the business based on where each approach creates the most value.

When should you build custom AI?

Custom AI is the right choice when your competitive advantage depends on a capability that doesn't exist in off-the-shelf products — and where that capability relies on proprietary data or processes that are unique to your business.

The test is straightforward: if your competitors could buy the same tool and get the same outcome, buying is almost always more efficient. Custom AI makes sense when the specific way you do something — the data you have, the workflow you've developed, the domain knowledge you've accumulated — is itself the competitive asset.

The second consideration is scale. Custom AI development carries upfront cost and ongoing maintenance overhead. Unless the value of the outcome is substantial and sustainable, buying or augmenting will typically deliver better ROI. A good rule of thumb: if you can't articulate a specific, measurable business outcome that justifies the development cost within 12–18 months, it's probably not a build decision.
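One way to make that rule of thumb concrete is a simple payback calculation. The sketch below treats "justifies the development cost" as "net benefit repays the upfront cost", which is an assumed interpretation, and every figure in it is invented for illustration.

```python
def payback_months(build_cost, monthly_benefit, monthly_maintenance):
    """Months until cumulative net benefit covers the upfront build cost.

    Returns None when the net monthly benefit is zero or negative,
    meaning the cost is never recovered under these assumptions.
    """
    net_monthly = monthly_benefit - monthly_maintenance
    if net_monthly <= 0:
        return None
    return build_cost / net_monthly


def looks_like_build_candidate(build_cost, monthly_benefit,
                               monthly_maintenance, max_months=18):
    """Apply the rule of thumb: payback within the 12-18 month window."""
    months = payback_months(build_cost, monthly_benefit, monthly_maintenance)
    return months is not None and months <= max_months


# Hypothetical figures: a 240,000 build, 5,000 a month to maintain.
strong_case = looks_like_build_candidate(240_000, 25_000, 5_000)
weak_case = looks_like_build_candidate(240_000, 12_000, 5_000)

print(payback_months(240_000, 25_000, 5_000))  # 12.0
print(strong_case, weak_case)                  # True False
```

A real business case would also account for delivery risk and the time before any benefit starts flowing, so treat this as a first filter rather than a decision.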

When should you buy off-the-shelf AI tools?

Buying is the right choice for capabilities that are genuinely commoditised — where the business need is standard and the market has already produced good solutions. AI tools for document summarisation, meeting transcription, customer service chatbots, and marketing content generation have become widely available, affordable, and capable. Building these from scratch would be a poor use of resources.

The hidden risk of buying is vendor dependency and cost creep. Many AI tools start with attractive pricing models that change significantly at scale, or as vendors add features. Due diligence should cover: total cost of ownership over three years (not just the subscription price), data portability (can you get your data out if you switch?), and integration compatibility with your existing systems.
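To show why the three-year view matters, here is a minimal sketch of a total cost of ownership calculation. The cost categories, seat counts, and prices are all hypothetical; a real comparison would use the vendor's actual pricing tiers and your own integration estimates.

```python
def three_year_tco(seats_by_year, monthly_price_per_seat_by_year,
                   one_off_costs, annual_overhead):
    """Total cost of ownership over three years.

    seats_by_year and monthly_price_per_seat_by_year each hold three
    values, one per year, so growth in adoption and price changes
    are both reflected in the total.
    """
    if len(seats_by_year) != 3 or len(monthly_price_per_seat_by_year) != 3:
        raise ValueError("expected exactly three yearly values")
    subscription = sum(
        seats * price * 12
        for seats, price in zip(seats_by_year, monthly_price_per_seat_by_year)
    )
    return subscription + sum(one_off_costs.values()) + 3 * annual_overhead


# Hypothetical scenario: adoption grows and the price rises in year three.
seats = [20, 50, 80]
prices = [30, 30, 40]
one_off = {"integration": 15_000, "training": 8_000}

headline_year_one = seats[0] * prices[0] * 12
total = three_year_tco(seats, prices, one_off, annual_overhead=6_000)

print(headline_year_one)  # 7200
print(total)              # 104600
```

In this invented scenario the headline first-year subscription is 7,200, while the three-year total is 104,600, which is the kind of difference the due diligence is meant to surface.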

The other buying risk is that off-the-shelf tools are designed for general use cases. They may not fit your specific workflow closely enough to deliver the productivity gains promised. Always pilot before committing to a significant tool purchase, and define the success criteria before the pilot starts.

What does "augment" mean in an AI technology context?

Augmentation is the most underappreciated option in the framework — and often the fastest path to value for mid-sized businesses. Rather than replacing existing systems (which is expensive and disruptive) or building new ones from scratch (which takes time and expertise), augmentation means adding AI capability to what you already have.

Practical examples include: connecting your CRM to an AI service that scores leads and recommends next actions, without replacing the CRM itself; adding an AI layer to your customer support platform that suggests responses to agents based on previous successful tickets; or integrating a document AI tool into your contract management process that flags clauses and surfaces relevant precedents.

The advantage of augmentation is that it works with your existing data, workflows, and systems rather than against them. The implementation risk is lower, the change management challenge is more contained, and the path to productivity gain is faster. For most mid-sized businesses, augmentation should be the default starting point before more significant build or buy decisions are made.

Frequently Asked Questions

How long does it take to build custom AI? It varies significantly with complexity, but a realistic timeline for a production-ready custom AI capability is 3–9 months from discovery to deployment, with ongoing iteration thereafter. Projects that try to compress this timeline significantly typically end up with technical debt that costs more to fix later. The build decision should only be made when the business case clearly justifies this timeline.

What are the hidden costs of off-the-shelf AI tools? The most common hidden costs are: per-seat pricing that escalates as adoption grows; data migration and integration costs that aren't included in the subscription; staff training time; ongoing management overhead; and eventual switching costs when the tool no longer meets your needs. Factor all of these into your cost comparison, not just the headline subscription price.

Can we start with buying and move to building later? Absolutely — and this is often the recommended path. Buying a capable off-the-shelf tool lets you understand the problem space, develop internal expertise, and generate data that might eventually support a custom build. Starting with buy also means you can generate business value while the custom capability is being developed, rather than waiting 6+ months before seeing any return.

Why Most AI Adoption Fails: The Change Management Problem Nobody Talks About

Most AI adoption conversations start with technology. Which platform? Which tools? How much compute? It's understandable — the technology is genuinely exciting, and it's the most visible part of the process. But it's rarely where AI adoption actually breaks down.