The AiGILE Blog

Is Your Data Ready for AI? The Foundation Most Businesses Skip

There's a phrase that gets used a lot in enterprise technology: "garbage in, garbage out." It predates AI by several decades. But it's never been more relevant than it is right now, because the quality of AI output is directly — and in many cases brutally — dependent on the quality of the data you're working with.

Most mid-sized businesses underestimate their data readiness challenges before starting AI initiatives. They invest in tools, discover the data problem mid-project, and either scale back their ambitions or spend significant additional resources fixing issues that could have been identified up front.

Why does data readiness matter before adopting AI?

AI systems — whether you're using a commercial platform or building custom capabilities — learn from data and operate on data. If that data is incomplete, inconsistent, poorly structured, or inaccessible, the AI's outputs will reflect those problems. A customer segmentation model trained on incomplete CRM data will produce unreliable segments. A predictive demand-planning tool fed inconsistent historical sales data will produce unreliable forecasts.

The cost of discovering data problems mid-project is significant. A widely cited IBM estimate from 2016 put the cost of poor data quality to the US economy at around $3.1 trillion per year in wasted resources and missed opportunities. At the project level, data quality issues discovered after an AI initiative has started are typically 3–5 times more expensive to resolve than if they had been addressed upfront.

Data readiness assessment isn't a bureaucratic hurdle on the path to AI adoption. It's a commercial decision that protects your investment in what comes next.

What does "data ready" actually mean?

Data readiness has four dimensions, and a business needs to be in reasonable shape across all of them before an AI initiative can be expected to succeed.

Availability means the data you need exists, is digitised, and is accessible to the systems that will use it. Data that is locked in spreadsheets on individual laptops, stored in legacy systems with no API access, or held only on paper isn't available for AI use, regardless of its quality.

Quality means the data is accurate, complete, and consistent. Duplicate records, missing values, inconsistent formats (dates stored as text, addresses in different formats), and outdated information all degrade AI performance.

Governance means there is clear ownership, defined standards, and documented processes for how data is created, maintained, and used. Without governance, data quality degrades over time regardless of how clean it starts. This is also where privacy, security, and compliance considerations live.

Volume means there is sufficient data for the AI application you're building or using. Some AI capabilities require substantial historical data to perform reliably. Understanding the minimum data requirements for a specific AI use case is an important part of the feasibility assessment.
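The quality problems named above (duplicate records, missing values, dates stored in inconsistent formats) are straightforward to count once the data can be exported. The following is a minimal sketch in Python, not a full profiling tool. The sample records, the field names, and the `profile_quality` helper are all invented for illustration, and it assumes that ISO-style year-month-day strings are the expected date format.

```python
from datetime import datetime

# Hypothetical sample records; field names and values are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "signup_date": "2023-01-15"},
    {"id": 2, "email": "ana@example.com", "signup_date": "15/01/2023"},
    {"id": 3, "email": None, "signup_date": "2023-02-01"},
    {"id": 4, "email": "raj@example.com", "signup_date": ""},
]


def is_iso_date(value):
    """Return True if value parses as a year-month-day date string."""
    try:
        datetime.strptime(value, "%Y-%m-%d")
        return True
    except (TypeError, ValueError):
        return False


def profile_quality(rows, key_field, date_field):
    """Count duplicate keys, missing values, and dates in an unexpected format."""
    seen, duplicates, missing, bad_dates = set(), 0, 0, 0
    for row in rows:
        key = row.get(key_field)
        if key in (None, ""):
            missing += 1
        elif key in seen:
            duplicates += 1
        else:
            seen.add(key)
        date_value = row.get(date_field)
        if date_value in (None, ""):
            missing += 1
        elif not is_iso_date(date_value):
            bad_dates += 1
    return {"duplicates": duplicates, "missing": missing, "bad_dates": bad_dates}


report = profile_quality(records, "email", "signup_date")
print(report)
```

Even a rough count like this turns "our CRM data is a bit messy" into numbers that can be tracked before and after a cleanse.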

How do you assess your current data quality?

A basic data readiness assessment can be conducted quickly with a structured approach. For each major data domain that your AI initiative will rely on (customer data, product data, operational data, financial data), assess the four dimensions in turn: Is it available? Is it of adequate quality? Is it governed? Is there enough of it?

The most revealing questions to ask are often the simple ones: Who owns this data? When was it last audited? What percentage of records are complete? Can we export it in a standard format? How many systems does it live in? If these questions produce uncertain or inconsistent answers, that's a signal that the data foundation needs work before AI initiatives are built on top of it.

For mid-sized businesses, a focused data assessment of the core domains relevant to a specific AI initiative typically takes 2–4 weeks and produces a clear picture of what's ready to use, what needs remediation, and what represents a longer-term investment.
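One simple way to record the outcome of such an assessment is a small scorecard: one row per data domain, one rating per dimension. The sketch below assumes a 0 to 3 rating scale and a minimum acceptable rating of 2. Both are arbitrary choices made for this example, and the ratings themselves are invented.

```python
DIMENSIONS = ("availability", "quality", "governance", "volume")

# Hypothetical ratings on a 0-3 scale (0 = absent, 3 = solid); values are invented.
assessment = {
    "customer": {"availability": 3, "quality": 1, "governance": 2, "volume": 3},
    "product": {"availability": 2, "quality": 2, "governance": 2, "volume": 2},
    "financial": {"availability": 3, "quality": 3, "governance": 1, "volume": 3},
}


def weak_dimensions(scores, minimum=2):
    """List the dimensions rated below the minimum for one data domain."""
    return [d for d in DIMENSIONS if scores.get(d, 0) < minimum]


def summarise(ratings, minimum=2):
    """Map each domain to its weak dimensions; an empty list means ready."""
    return {domain: weak_dimensions(scores, minimum) for domain, scores in ratings.items()}


summary = summarise(assessment)
for domain, gaps in summary.items():
    status = "ready" if not gaps else "needs work on " + ", ".join(gaps)
    print(f"{domain}: {status}")
```

The value is less in the arithmetic than in forcing a named rating for every domain and dimension, which tends to surface the "nobody knows" answers quickly.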

What's the fastest way to improve data readiness?

The fastest path is to narrow the scope. Rather than attempting to fix all data quality issues across the entire business before starting AI initiatives, identify the specific data domains required for your highest-priority AI use cases and focus remediation there.

For most mid-sized businesses, this means: establishing clear data ownership (a named person responsible for each critical data domain); running a targeted data quality cleanse on the records that the AI initiative will actually use; implementing basic data governance rules that prevent the same issues from recurring; and ensuring the data is accessible to the AI platform in the required format.

This focused approach is more pragmatic than a wholesale data transformation programme, and it generates value faster. It also yields insights into your data management practices that inform the broader investment in data governance over time.

Frequently Asked Questions

Do we need to fix all our data before we can start with AI? No, and attempting to do so would unnecessarily delay AI adoption. The goal is "fit for purpose" data, not perfect data. For each AI use case, define the minimum data quality threshold required for acceptable performance, assess whether your current data meets that threshold, and remediate the specific gaps. You can run AI initiatives in parallel with broader data quality improvement programmes.

What data quality score is "good enough" for AI? There's no universal answer — it depends on the use case and the consequences of errors. An AI tool summarising internal meeting notes can tolerate more data imperfection than an AI model used in clinical decision-making. The right question is: what's the minimum quality level where the AI's output is reliably useful, and is our data at or above that level? If you can't answer this for your specific use case, that's the first thing to establish.
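As an illustration of the "minimum quality level" idea, the sketch below measures record completeness and compares it with a threshold set per use case. The thresholds, the use case names, and the sample sales rows are assumptions invented for this example. In practice completeness is only one of several measures you would set a threshold for.

```python
# Hypothetical minimum completeness per use case; the thresholds are assumptions.
THRESHOLDS = {"meeting_summaries": 0.70, "demand_forecast": 0.95}


def completeness(rows, required_fields):
    """Share of rows in which every required field has a non-empty value."""
    if not rows:
        return 0.0
    complete = sum(
        all(row.get(f) not in (None, "") for f in required_fields) for row in rows
    )
    return complete / len(rows)


def fit_for_purpose(rows, required_fields, use_case):
    """Compare measured completeness with the threshold for one use case."""
    measured = completeness(rows, required_fields)
    return measured >= THRESHOLDS[use_case], measured


# Invented weekly sales rows; one row is missing its unit count.
sales = [
    {"sku": "A1", "units": 10, "week": "2024-W01"},
    {"sku": "A1", "units": None, "week": "2024-W02"},
    {"sku": "B2", "units": 4, "week": "2024-W01"},
    {"sku": "B2", "units": 7, "week": "2024-W02"},
]

ok, measured = fit_for_purpose(sales, ["sku", "units", "week"], "demand_forecast")
print(f"completeness={measured:.2f}, fit for purpose: {ok}")
```

The same data can pass for one use case and fail for another, which is the point of defining the threshold per use case rather than chasing a single score.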

Who is responsible for data readiness in a mid-sized business? In most mid-sized businesses, data readiness is a shared responsibility that sits awkwardly among IT (who manage the systems), operations (who generate the data), and whoever leads the AI initiative. This ambiguity is itself a governance problem. Best practice is to establish a named "data owner" for each critical domain — typically a senior operational person, not an IT person — who is accountable for the quality of data in that area.

Not sure where your business stands with AI?

Find out your AiDOPTION Score — a free 10-minute diagnostic that measures your AI readiness across Strategy, Technology, and People. You'll get a personalised score and practical recommendations.

30 Years in Technology Taught Me This About AI: It's Different This Time

I've been working in technology since the early 1990s. I've lived through the commercialisation of the internet, the dot-com boom and bust, the arrival of mobile, the rise of cloud computing, social media, and a dozen other waves that were each described, at the time, as the most transformative technology shift in a generation.

So when I tell you that AI is different — genuinely different, in ways that make most of what we've experienced before look like incremental change — I want you to understand that it's not something I say lightly. And I want to explain why, based on three decades of watching technology cycles, I believe that.

Why does every technology cycle feel different, and why is this one actually different?

Every major technology wave comes with a version of the same claim: this changes everything. And in most cases, the claim is both true and overstated. The internet did change everything. It also took fifteen years to fully manifest in mainstream business practice, and the "everything" it changed was more narrowly defined than the early evangelists suggested.

The pattern I've observed across multiple cycles is consistent: initial excitement, early adoption by the technically bold, a reality check when the hard implementation challenges emerge, gradual maturation, and eventually mainstream adoption that is genuinely transformative but slower and less dramatic than the peak of the hype cycle suggested.

AI is following this pattern. But there are three things about this wave that make it substantively different from everything I've worked with before, and they have direct implications for how mid-sized businesses should be thinking about their response.

What have 30 years of technology change taught me about adoption?

The most consistent lesson from 30 years of digital product development is that technology succeeds when it solves real problems for real people and fails when it's deployed for its own sake. This sounds obvious, but it's violated constantly.

I've seen large organisations spend years and significant capital on technology implementations that never delivered meaningful business outcomes because the adoption question was never properly answered. The technology worked. The people didn't change. And a technology that nobody uses delivers no value regardless of its capability.

The second consistent lesson is that the human side of technology adoption is always the harder problem. Technical implementation, while complex, is typically more predictable than people change. The businesses that succeed with technology consistently invest disproportionately in change management, training, and culture — not just infrastructure and software.

The third lesson is that the organisations that build genuine capability — rather than buying a solution and assuming it will work — compound their advantage over time. The businesses I've seen succeed with every major technology wave are those that developed internal expertise, not just vendor relationships.

How is AI different from every previous technology wave?

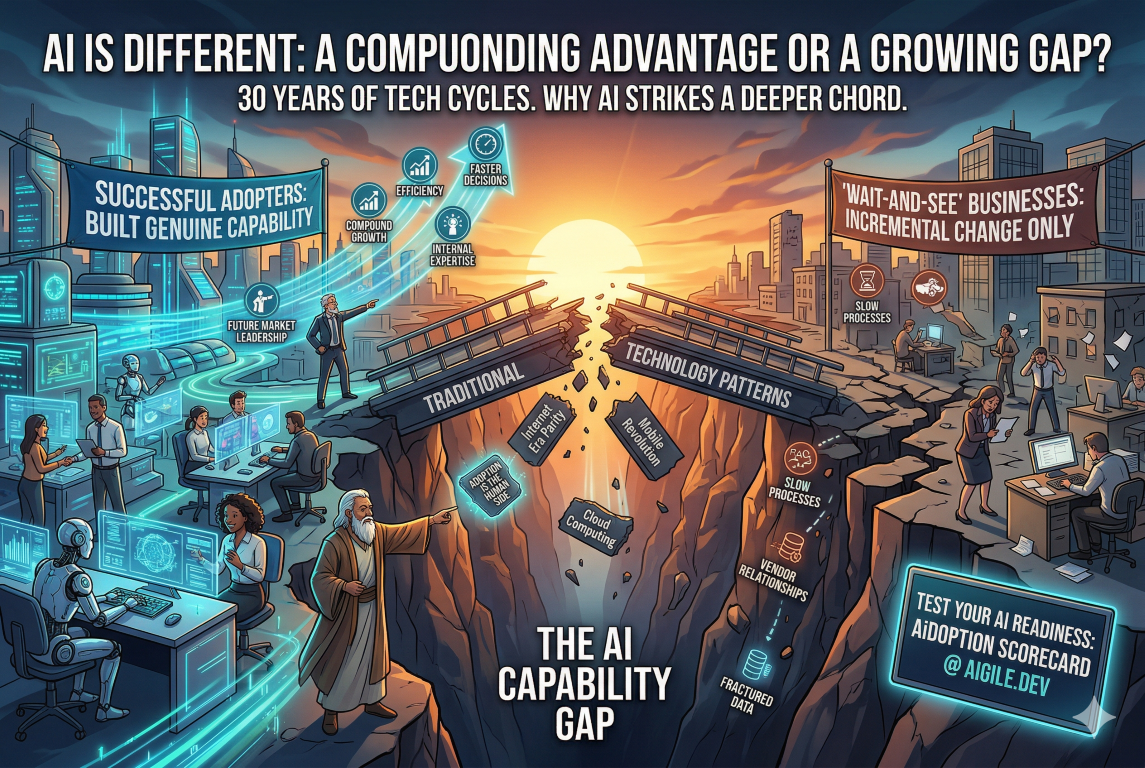

Three things distinguish this wave from what came before.

First, the breadth of application is unprecedented. Previous technology waves had wide but ultimately bounded impacts. The internet transformed information exchange and commerce. Mobile transformed communication and location-based services. AI has the potential to augment almost every cognitive task performed in almost every business function. The scope is genuinely different.

Second, the pace of capability development is accelerating in a way it did not during previous technology waves. The internet developed quickly, but the capability curve for AI (the rate at which systems are becoming more capable) is steeper and shows fewer signs of plateauing. The landscape is changing under businesses' feet in real time, not just at product launch cycles.

Third, AI is, for the first time, creating a meaningful capability gap at the level of everyday operational work between businesses that adopt it well and those that don't. Previous technology waves largely created parity: when everyone has a website, having a website doesn't differentiate you. AI, done well, builds into a compounding advantage: better processes, better decisions, better products, delivered faster, at lower cost. That gap, once established, is hard to close.

What does this mean for business leaders today?

It means the strategic response can't be "wait and see." The businesses that wait for AI to mature before engaging will find themselves behind a curve that's already steep and getting steeper.

It also doesn't mean running at every AI opportunity simultaneously. The businesses I've seen succeed with major technology transitions are those that make deliberate, strategic choices about where to focus, build deep capability in those areas, and then expand from a position of genuine competence rather than scattered experimentation.

The question isn't whether to adopt AI. It's how to do it in a way that builds lasting capability — in your strategy, your technology, and most importantly, your people. Those three things together are what turn AI investment into business outcomes that actually show up in your results.

That's why I founded AiGILE. Not to sell technology, but to help businesses build the genuine AI capability that I've seen make the difference, over and over, for thirty years.

Frequently Asked Questions

Is AI really different from previous automation waves? Yes, in a meaningful way. Previous automation waves primarily replaced repetitive physical tasks (manufacturing) or highly structured cognitive tasks (data processing). AI can augment and in some cases replace judgment-intensive, unstructured cognitive work — writing, analysis, design, strategy support, and customer interaction. The scope of what can be affected is substantially broader than previous automation.

How long will it take for AI to fully transform most businesses? Based on historical technology adoption patterns, mainstream transformation of business operations will take 7–15 years. But the leading adopters will build substantial advantages within 2–5 years that will be very difficult for laggards to close. The question isn't about the end state — it's about where you want to be in the competitive landscape in three to five years.

What's the first thing a business leader should do about AI today? Understand where your business currently stands. Not with a gut feeling, but with a structured assessment across strategy, technology, and people dimensions. You can't make good decisions about where to invest without an honest baseline. The AiDOPTION Scorecard is designed to give you exactly that — a clear, specific picture of your current AI readiness and where the priority gaps are.

Build, Buy, or Augment? How to Make the Right AI Technology Decision

Most AI adoption conversations start with technology. Which platform? Which tools? How much compute? It's understandable — the technology is genuinely exciting, and it's the most visible part of the process. But it's rarely where AI adoption actually breaks down.

One of the most consequential decisions a mid-sized business makes on its AI journey is also one of the least discussed: should you build your own AI capabilities, buy off-the-shelf AI tools, or augment your existing systems with AI? Get this decision right and your AI investments compound over time. Get it wrong, and you can spend 12–18 months on the wrong path before realising it.

The good news is that this decision is more straightforward than it looks, once you understand the trade-offs clearly.

What are the three AI technology options for mid-sized businesses?

The "build, buy, or augment" framework covers the three fundamental approaches to acquiring AI capability:

Build means developing custom AI solutions specifically for your business. This could mean training your own models, building custom AI-powered applications, or developing bespoke automations tailored to your exact workflows and data.

Buy means purchasing off-the-shelf AI products — tools, platforms, or SaaS solutions that come with AI capabilities built in. This category now includes most major business software: CRMs with AI features, analytics platforms with predictive capabilities, and dedicated AI tools for specific functions.

Augment means adding AI capabilities to your existing systems — integrating AI APIs into current platforms, layering AI assistants onto existing workflows, or connecting existing tools to AI services without replacing those tools.

The optimal strategy for most mid-sized businesses involves all three, applied to different parts of the business based on where each approach creates the most value.

When should you build custom AI?

Custom AI is the right choice when your competitive advantage depends on a capability that doesn't exist in off-the-shelf products — and where that capability relies on proprietary data or processes that are unique to your business.

The test is straightforward: if your competitors could buy the same tool and get the same outcome, buying is almost always more efficient. Custom AI makes sense when the specific way you do something — the data you have, the workflow you've developed, the domain knowledge you've accumulated — is itself the competitive asset.

The second consideration is scale. Custom AI development carries upfront cost and ongoing maintenance overhead. Unless the value of the outcome is substantial and sustainable, buying or augmenting will typically deliver better ROI. A good rule of thumb: if you can't articulate a specific, measurable business outcome that justifies the development cost within 12–18 months, it's probably not a build decision.
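One way to apply this rule of thumb is a simple payback calculation. The sketch below reads "justifies the development cost within 12–18 months" as "cumulative net value covers the build cost within 18 months", which is one possible interpretation rather than the only one. All figures are invented.

```python
def payback_months(build_cost, monthly_value, monthly_maintenance=0.0):
    """Months until cumulative net value covers the upfront build cost.

    Returns None when maintenance consumes all of the monthly value.
    """
    net = monthly_value - monthly_maintenance
    if net <= 0:
        return None
    return build_cost / net


def passes_rule_of_thumb(build_cost, monthly_value, monthly_maintenance=0.0, limit=18):
    """True when the build pays back within the limit (18 months by default)."""
    months = payback_months(build_cost, monthly_value, monthly_maintenance)
    return months is not None and months <= limit


# Invented figures for illustration only.
months = payback_months(build_cost=240_000, monthly_value=25_000, monthly_maintenance=5_000)
print(months, passes_rule_of_thumb(240_000, 25_000, 5_000))
```

If the monthly value is hard to estimate even roughly, that difficulty is itself a sign the outcome isn't yet specific enough to justify a build.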

When should you buy off-the-shelf AI tools?

Buying is the right choice for capabilities that are genuinely commoditised — where the business need is standard and the market has already produced good solutions. AI tools for document summarisation, meeting transcription, customer service chatbots, and marketing content generation have become widely available, affordable, and capable. Building these from scratch would be a poor use of resources.

The hidden risk of buying is vendor dependency and cost creep. Many AI tools start with attractive pricing models that change significantly at scale, or as vendors add features. Due diligence should cover: total cost of ownership over three years (not just the subscription price), data portability (can you get your data out if you switch?), and integration compatibility with your existing systems.
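To make the three-year total cost of ownership concrete, here is a rough sketch that adds subscription growth, one-off costs, and recurring overheads. The cost categories, seat counts, and prices are invented for illustration, and a real comparison would use the vendor's actual pricing tiers.

```python
def total_cost_of_ownership(seats_by_year, monthly_price_by_year, one_off_costs, annual_overheads):
    """Sum subscription, one-off, and recurring overhead costs over the years given."""
    years = len(seats_by_year)
    subscription = sum(
        seats * price * 12
        for seats, price in zip(seats_by_year, monthly_price_by_year)
    )
    return subscription + sum(one_off_costs.values()) + years * sum(annual_overheads.values())


# Invented figures: adoption grows and the monthly per-seat price rises in year 3.
total = total_cost_of_ownership(
    seats_by_year=[20, 50, 80],
    monthly_price_by_year=[30, 30, 40],
    one_off_costs={"integration": 15_000, "data_migration": 5_000},
    annual_overheads={"training": 4_000, "administration": 6_000},
)
headline = 20 * 30 * 12 * 3  # naive estimate: year-one seats and price held flat
print(total, headline)
```

In this made-up scenario the fuller estimate is several times the headline subscription figure, which is the kind of gap due diligence is meant to expose before signing.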

The other buying risk is that off-the-shelf tools are designed for general use cases. They may not fit your specific workflow closely enough to deliver the productivity gains promised. Always pilot before committing to a significant tool purchase, and define the success criteria before the pilot starts.

What does "augment" mean in an AI technology context?

Augmentation is the most underappreciated option in the framework — and often the fastest path to value for mid-sized businesses. Rather than replacing existing systems (which is expensive and disruptive) or building new ones from scratch (which takes time and expertise), augmentation means adding AI capability to what you already have.

Practical examples include: connecting your CRM to an AI service that scores leads and recommends next actions, without replacing the CRM itself; adding an AI layer to your customer support platform that suggests responses to agents based on previous successful tickets; or integrating a document AI tool into your contract management process that flags clauses and surfaces relevant precedents.

The advantage of augmentation is that it works with your existing data, workflows, and systems rather than against them. The implementation risk is lower, the change management challenge is more contained, and the path to productivity gain is faster. For most mid-sized businesses, augmentation should be the default starting point before more significant build or buy decisions are made.
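The lead-scoring example can be sketched in a few lines to show the shape of an augmentation. In this sketch, `stub_score` is a hypothetical stand-in for whatever AI scoring service you would actually call, and the field names and the 0.7 cut-off are assumptions. The point is the pattern: CRM records go in, enriched copies come out, and the CRM itself is untouched.

```python
from typing import Any, Callable, Dict, List

Lead = Dict[str, Any]


def stub_score(lead: Lead) -> float:
    """Stand-in for an external AI scoring service (a made-up rule, not a real model)."""
    score = 0.2
    if lead.get("opened_last_email"):
        score += 0.3
    if lead.get("employees", 0) >= 50:
        score += 0.4
    return round(score, 2)


def augment_leads(leads: List[Lead], scorer: Callable[[Lead], float]) -> List[Lead]:
    """Return copies of CRM leads with a score and a suggested next action added.

    The original records are left unchanged, so the CRM stays the system of record.
    """
    enriched = []
    for lead in leads:
        score = scorer(lead)
        action = "call this week" if score >= 0.7 else "keep nurturing"
        enriched.append({**lead, "ai_score": score, "next_action": action})
    return sorted(enriched, key=lambda item: item["ai_score"], reverse=True)


# Invented CRM export for illustration.
crm_leads = [
    {"name": "Birch Ltd", "opened_last_email": False, "employees": 10},
    {"name": "Acme Co", "opened_last_email": True, "employees": 120},
]
ranked = augment_leads(crm_leads, stub_score)
for lead in ranked:
    print(lead["name"], lead["ai_score"], lead["next_action"])
```

Because the scorer is passed in as a function, the stub can later be swapped for a real service without changing how the rest of the workflow consumes the results.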

Frequently Asked Questions

How long does it take to build a custom AI capability? It varies significantly with complexity, but a realistic timeline for a production-ready custom AI capability is 3–9 months from discovery to deployment, with ongoing iteration thereafter. Projects that try to compress this timeline significantly typically end up with technical debt that costs more to fix later. The build decision should only be made when the business case clearly justifies this timeline.

What are the hidden costs of off-the-shelf AI tools? The most common hidden costs are: per-seat pricing that escalates as adoption grows; data migration and integration costs that aren't included in the subscription; staff training time; ongoing management overhead; and eventual switching costs when the tool no longer meets your needs. Factor all of these into your cost comparison, not just the headline subscription price.

Can we start with buying and move to building later? Absolutely — and this is often the recommended path. Buying a capable off-the-shelf tool lets you understand the problem space, develop internal expertise, and generate data that might eventually support a custom build. Starting with buy also means you can generate business value while the custom capability is being developed, rather than waiting 6+ months before seeing any return.
